Breaking the Chains of Probability: Neutrosophic Logic as a New Framework for Epistemic Uncertainty in Large Language Models

Keywords:

neutrosophic logic; large language models; epistemic uncertainty; hyper-truth; uncertainty quantification; indeterminacy; ethical AI; plithogenic structure

Abstract

Large Language Models (LLMs) are predominantly governed by probabilistic frameworks

in which the sum of outcome probabilities is constrained to unity. This limitation, often imposed by

Softmax layers, leads to a collapse of uncertainty that conflates ignorance, paradox, and vagueness.

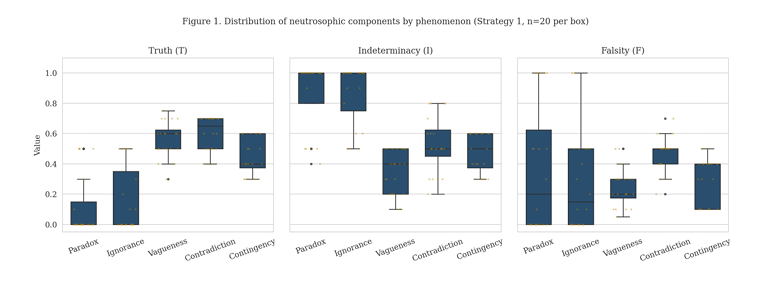

We present an empirical investigation of Neutrosophic Logic, in which Truth (T), Indeterminacy (I),

and Falsity (F) are three independent dimensions on [0, 1], applied to elicit declared epistemic states

from LLMs. Across 300 API calls — including 100 valid unconstrained neutrosophic evaluations —

on four OpenAI GPT models and five linguistic phenomena (five repetitions per cell), the

neutrosophic strategy yields hyper-truth (T + I + F > 1) in 66.0% of Strategy-1 evaluations, with the

highest rates observed in ethical contradiction (95%) and future contingency (70%). A Pearson chi

square test of phenomenon × hyper-truth association is significant (chi-square = 11.32, df = 4, p =

0.023). Mason (2026) independently replicated and extended an earlier release of this work across

five additional model families from five different vendors, reporting hyper-truth in 84% of

unconstrained evaluations. We do not claim that hyper-truth is an intrinsic latent variable inside the

model; rather, that unconstrained neutrosophic prompting elicits declared epistemic states that

probabilistic prompting structurally suppresses by Proposition 1.
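The distinction the abstract draws can be illustrated with a minimal Python sketch. It assumes only what is stated above: T, I, and F each lie independently in [0, 1], and hyper-truth means T + I + F > 1. The names `NeutrosophicTriple`, `is_hyper_truth`, `softmax`, and `hyper_truth_rate` are hypothetical helpers introduced for illustration, not the authors' code, and the numeric inputs are made-up examples rather than values from the study.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class NeutrosophicTriple:
    """A declared (T, I, F) evaluation; each component lies in [0, 1]."""
    t: float
    i: float
    f: float

    def __post_init__(self):
        # Each dimension is bounded on its own; no constraint ties them together.
        for name, value in (("t", self.t), ("i", self.i), ("f", self.f)):
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name}={value} is outside [0, 1]")

    @property
    def total(self) -> float:
        return self.t + self.i + self.f

    def is_hyper_truth(self, tol: float = 1e-9) -> bool:
        # Hyper-truth as defined in the abstract: T + I + F > 1.
        # The tolerance (an assumption) absorbs floating-point rounding.
        return self.total > 1.0 + tol


def softmax(logits):
    """Numerically stable softmax; its outputs always sum to 1."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    norm = sum(exps)
    return [e / norm for e in exps]


def hyper_truth_rate(triples):
    """Fraction of evaluations whose components sum to more than 1."""
    triples = list(triples)
    if not triples:
        raise ValueError("no evaluations supplied")
    return sum(t.is_hyper_truth() for t in triples) / len(triples)


# Illustrative values only (not data from the study).
declared = NeutrosophicTriple(0.8, 0.6, 0.7)
normalized = NeutrosophicTriple(*softmax([2.0, 1.0, 0.5]))
```

Here `declared.is_hyper_truth()` is true, while `normalized.is_hyper_truth()` is false for any choice of logits, because a softmax-normalized triple sums to one by construction. That is the structural suppression the abstract attributes to probabilistic prompting.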

License

Copyright (c) 2026 Neutrosophic Sets and Systems

This work is licensed under a Creative Commons Attribution 4.0 International License.